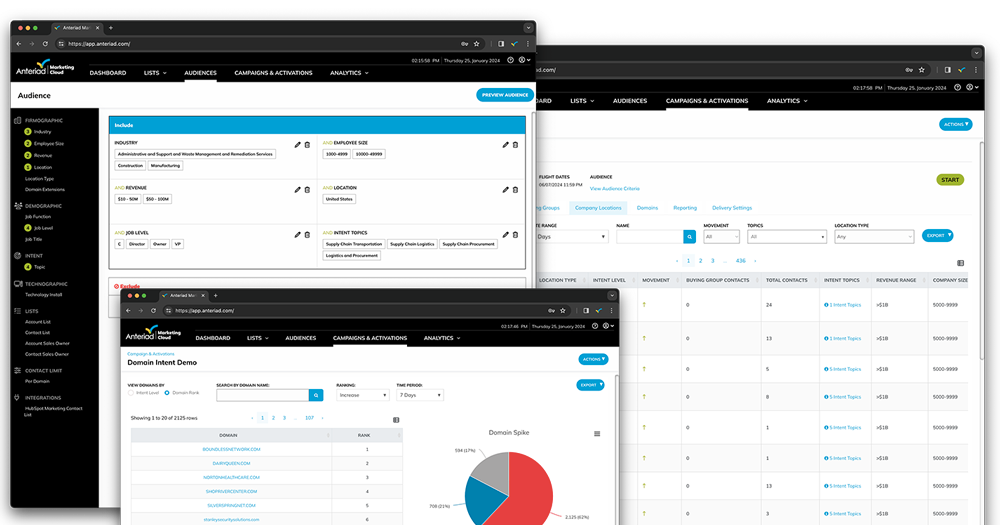

Define, find, and convert your ideal customers faster so you can hit goals, grow pipeline, and prove ROI.

Our global B2B data powers:

Full-funnel marketing to power your pipeline

32X closed-won business

Data is at the core of everything we do.

Certified by Neutronian as a top 5 data provider, we rank in the top 1% for quality and transparency. Our global, privacy-compliant data drives customer acquisition and AI-powered solutions. Elevate your ABM strategies with our industry-leading B2B data.